2020 Altcoin Node Sync Tests

As I’ve noted many times in the past, running a fully validating node gives you the strongest security and privacy model available to Bitcoin users; the same holds true for altcoins. As such, an important question for any peer-to-peer network is the performance, and thus the cost, of running your own node. Worse performance requires more expensive hardware, which prices more users out of the sovereign security model enabled by running their own node.

The computer I use as a baseline is high-end but uses off-the-shelf hardware. I bought this PC at the beginning of 2018. It cost about $2,000 at the time.

Just set up a maxed out @PugetSystems PC on PureOS 8.0.

Core i7 8700 3.2GHz 6 core CPU

32 GB DDR4-2666

Samsung 960 EVO 1TB M.2 SSD

Synced Bitcoin Core 0.15.1 (w/maxed out dbcache) in 162 min w/peak speeds of 80 MB/s. Next step: see how much traffic I can serve on gigabit fiber. pic.twitter.com/QOvuwPgCgy

— Jameson Lopp (@lopp) February 11, 2018

Note that no node implementation I've come across fully validates the entire blockchain history by default. As a performance optimization, most of them don't validate signatures or other state changes before a certain point in time, usually a year or two in the past. This trick speeds up the initial blockchain sync, but it makes the node's actual syncing performance harder to measure, since the node effectively skips an arbitrary amount of computation. As such, for each test I configure the node so that it is forced to validate the entire history of the blockchain. Here are the results!

Geth

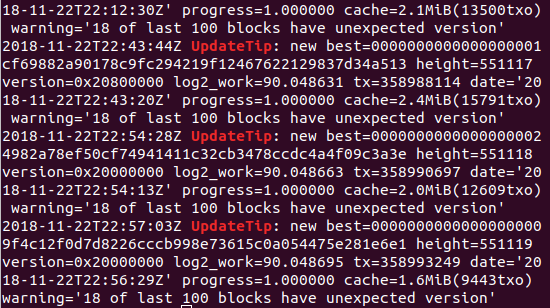

Synced Geth 1.9.9 with the following config values:

```toml
[Eth]
NetworkId = 1
SyncMode = "full"
NoPruning = false
DatabaseCache = 26000
```

It took my benchmark machine 5 days 15 hours to full sync Geth 1.9.9 to block 9,330,000. It performed over 6 TB of disk reads and 5 TB of disk writes, and was disk I/O-bound the entire time.

— Jameson Lopp (@lopp) January 22, 2020

When I tested the Geth 1.8.19 release in December 2018, it took 4 days, 6 hours to reach block 6,850,000.

During this test, Geth 1.9.9 took 2 days, 16 hours to reach block 6,850,000.

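In terms of pure performance, this is a significant and impressive improvement: 1.9.9 reached the same height in 37% less time, which corresponds to roughly 59% more syncing throughput. However, it's not quite enough to offset the relentlessly increasing amount of data that needs to be validated; a full sync to block 9,330,000 still took 5 days 15 hours.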
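Because a shorter sync time to the same block height can be read either as time saved or as extra throughput, the percentage depends on which one you compute. A minimal check, using only the Geth sync times quoted above converted to hours:

```python
# Sync times to block 6,850,000, converted to hours (figures from the tests above).
geth_1_8_19_hours = 4 * 24 + 6   # 4 days, 6 hours  = 102 h
geth_1_9_9_hours = 2 * 24 + 16   # 2 days, 16 hours = 64 h

# Fraction of wall-clock time saved reaching the same block height.
time_reduction = 1 - geth_1_9_9_hours / geth_1_8_19_hours

# Relative increase in blocks processed per hour over the same range.
throughput_gain = geth_1_8_19_hours / geth_1_9_9_hours - 1

print(f"time reduction:  {time_reduction:.1%}")
print(f"throughput gain: {throughput_gain:.1%}")
```

The time saved comes out to about 37%, while the gain in blocks processed per hour is about 59%.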

The Ethereum network has confirmed a cumulative total of 614M (standard) transactions during its roughly 5 years of existence, while Bitcoin has confirmed 500M transactions. But due to its greater complexity, Ethereum requires significantly more operations to verify each transaction. This is why Bitcoin Core can verify its 500M transactions in 7 hours on my machine while Geth took 135 hours to verify its 614M, which makes Geth roughly 15 to 16 times slower in terms of transactions validated per hour.
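The per-hour comparison behind that estimate can be reproduced from the figures above alone. This is plain arithmetic; it treats every transaction as one unit of work, which ignores how much more work an average Ethereum transaction requires (the very point being made):

```python
# Cumulative transaction counts and full-validation sync times from the text.
btc_txs, btc_hours = 500e6, 7              # Bitcoin Core on the benchmark machine
eth_txs, eth_hours = 614e6, 5 * 24 + 15    # Geth 1.9.9: 5 days 15 hours = 135 h

btc_rate = btc_txs / btc_hours   # transactions validated per hour
eth_rate = eth_txs / eth_hours

print(f"Bitcoin Core: {btc_rate / 1e6:.1f}M tx/hour")
print(f"Geth:         {eth_rate / 1e6:.1f}M tx/hour")
print(f"ratio:        {btc_rate / eth_rate:.1f}x")
```

Bitcoin Core works out to roughly 71M transactions per hour against roughly 4.5M for Geth, a ratio of about 15.7.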

Parity

Synced Parity 2.6.8 with the following config values:

```toml
[parity]
auto_update = "none"

[network]
warp = false

[footprint]
pruning = "fast"
cache_size = 26000

[snapshots]
disable_periodic = true
```

It took my benchmark machine 9 days 2 hours to full sync Parity 2.6.8 to Ethereum block 9,390,000. It performed over 47 TB of disk reads and 42 TB of disk writes, and was disk I/O-bound the entire time.

— Jameson Lopp (@lopp) February 1, 2020

This is a pretty insane amount of disk operations! A little over a year after my last test, both the sync time and the disk operations required have more than doubled.

Full validation sync of @ParityTech 2.1.3 now takes 5,326 minutes (3.7 days) on this machine. I increased cache to 24GB RAM and it peaked at 23GB. Disk I/O is the bottleneck, with over 22TB read and 20TB written in total. 5X longer only 8 months later. https://t.co/N1hBLRMlN6

— Jameson Lopp (@lopp) October 28, 2018

An interesting point to note is that the node wouldn't sync at all when I first started it with the above config values! It didn't connect to a single peer, even after several hours. I then noticed that it was advertising its node address (enode) with my internal IP address, so I restarted it with my external IP address explicitly set, but I had the same problem. Next I downgraded to 2.5.13, the stable release, and it exhibited the same issue. After asking several folks and eventually ending up on the Parity Gitter channel, I was advised to manually set up a reserved_peers file to force Parity to connect to known healthy nodes, which I found via ethernodes.org.
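For anyone hitting the same problem: as far as I know, Parity's `reserved_peers` option (in the `[network]` section of the config file, or the `--reserved-peers` command-line flag) takes the path to a plain text file listing one enode URL per line. Below is a minimal sketch, assuming that format, of writing such a file with a basic sanity check on each entry. The helper name `write_reserved_peers` and the placeholder enode are hypothetical; real peer addresses would come from a source such as ethernodes.org.

```python
import re
from pathlib import Path

# An enode URL: 128 hex characters of node public key, then host and TCP port.
# Assumption for this sketch: IPv4/hostname only; IPv6 hosts and the optional
# "?discport=" suffix are deliberately not handled.
ENODE_RE = re.compile(r"^enode://[0-9a-fA-F]{128}@[^:@\s]+:\d{1,5}$")


def write_reserved_peers(enodes, path):
    """Write enode URLs one per line (hypothetical helper), rejecting malformed entries."""
    bad = [e for e in enodes if not ENODE_RE.match(e)]
    if bad:
        # Fail before touching the file so a typo never produces a half-valid list.
        raise ValueError(f"malformed enode URLs: {bad}")
    Path(path).write_text("\n".join(enodes) + "\n")
```

The resulting file would then be referenced from the config, for example `reserved_peers = "./reserved_peers.txt"` under `[network]`.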

I was also informed that Parity was, at the time, incompatible with Geth bootnodes because it didn't support EIP-2364, which I'm sure didn't help. This experience was disappointing, to say the least; it leads me to question whether the Parity implementation is being well maintained.

In terms of my historical syncing tests on this benchmark machine:

Parity 1.9.3 on Feb 26, 2018 took 17 hours, 10 minutes.

Parity 2.1.3 on Oct 28, 2018 took 3 days, 16 hours, 46 minutes.

Parity 2.6.8 on Jan 22, 2020 took 9 days, 2 hours.

It's pretty clear that Geth is doing a better job of fighting back against ever-increasing sync times, while Parity's current trajectory will put it in multi-week sync territory within another year. It's worth noting that if you try to perform a full validation sync on a computer with a spinning hard disk drive, it will probably never catch up with the chain tip due to the volume of disk operations required.
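To put numbers on that trajectory, here is the arithmetic on the three Parity results listed above. The projection at the end is a naive assumption (that the next interval simply repeats the most recent growth factor), not a forecast; note also that the 2.1.3 to 2.6.8 interval was about 15 months rather than exactly one year.

```python
# Parity full-validation sync times on the same machine, in hours.
syncs = [
    ("1.9.3, Feb 2018", 17 + 10 / 60),             # 17 h 10 min
    ("2.1.3, Oct 2018", 3 * 24 + 16 + 46 / 60),    # 3 d 16 h 46 min
    ("2.6.8, Jan 2020", 9 * 24 + 2),               # 9 d 2 h
]

# Growth factor between each pair of consecutive tests.
for (prev_label, prev_h), (label, h) in zip(syncs, syncs[1:]):
    print(f"{prev_label} -> {label}: {h / prev_h:.2f}x longer")

# Naive projection (assumption): the next interval repeats the last growth factor.
last_growth = syncs[-1][1] / syncs[-2][1]
projected_days = syncs[-1][1] * last_growth / 24
print(f"projected next sync: ~{projected_days:.0f} days")
```

The growth factors come out to roughly 5.2x and then 2.5x, and repeating the latter once more lands at around three weeks.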

Monero

I synced Monero 0.15.0.1 with the following values in bitmonero.conf:

```
max-concurrency=12
fast-block-sync=0
prep-blocks-threads=12
```

Full validation sync of monero 0.15.0.1 to block 2025045 on my benchmark machine took 1 day 2 hours 40 min. Caching policy is effective; only read 9GB from disk while writing 297GB. Bottleneck appears to be software rather than hardware.

— Jameson Lopp (@lopp) February 2, 2020

Similar to my sync over a year ago, there was no obvious resource bottleneck. Bandwidth usage was low, disk I/O was low, and CPU usage generally maxed out only one core. Monerod is clearly making good use of RAM for caching, because during the entire sync it read only 9 GB from disk while writing 297 GB. My assumption is that the bottleneck is in the code itself, and that transaction validation isn't parallelized enough to take full advantage of all your CPU cores. I also found it odd that throughout the sync it consistently processed about 20 blocks per second, which makes me wonder whether a bottleneck somewhere was feeding the CPU only that much work.
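As a sanity check on that observation, the overall average rate can be computed from the sync figures alone. This is only an average over the whole chain; it says nothing about how the rate varied from block to block.

```python
# Monero 0.15.0.1 full validation sync: to block 2,025,045 in 1 day 2 hours 40 min.
blocks = 2_025_045
elapsed_s = ((1 * 24 + 2) * 60 + 40) * 60  # 26 h 40 min = 96,000 s

avg_blocks_per_s = blocks / elapsed_s
print(f"average rate: {avg_blocks_per_s:.1f} blocks/s")
```

The result is about 21 blocks per second, in line with the steady rate observed during the sync.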

Full validation sync of Monero 0.13.0.4 in October 2018 on my benchmark machine took 20 hours 16 min, so we've added about 6 hours 24 minutes to the required sync time over the past year.

Zcash

I synced zcashd 2.1.1 with the following config:

```
dbcache=26000
par=12
checkpoints=0
```

zcashd found only 5 peers from its DNS seeds, which was concerningly low; it took several minutes before it connected to a peer. I also noticed that it was using only half of my cores; it appears to set the number of script verification threads to the number of physical rather than logical cores, so I bumped that up to 12.
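If you want to reproduce this setting on a different machine, a minimal sketch of deriving the thread count from the logical CPU count might look like the following. `zcash_conf_lines` is a hypothetical helper that simply emits the same three zcash.conf lines used in this test; it relies on Python's `os.cpu_count()`, which reports logical CPUs (12 on this 6-core, 12-thread i7-8700).

```python
import os


def zcash_conf_lines(dbcache_mb, threads=None):
    """Return zcash.conf lines matching this test's settings (hypothetical helper).

    If threads is not given, fall back to the logical CPU count, since zcashd's
    default appeared to use only the physical core count.
    """
    if threads is None:
        threads = os.cpu_count() or 1  # os.cpu_count() can return None
    return [
        f"dbcache={dbcache_mb}",  # database cache size in MiB
        f"par={threads}",         # number of script verification threads
        "checkpoints=0",          # disable checkpoints so the full history is validated
    ]


print("\n".join(zcash_conf_lines(26000)))
```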

It took my benchmark machine 3 hours & 30 min to do a full validation sync of zcash 2.1.1 to block 713,000. Caching helped significantly as zcashd only read 13MB from disk while writing 26GB.

— Jameson Lopp (@lopp) February 1, 2020

Because it could keep the entire UTXO set in cache, zcashd completed the sync while reading only 13 MB from disk and writing 26 GB. The process was CPU-bound the whole time.

When I synced Zcash 2.0.1 in October 2018, it took my machine 6 hours 11 minutes, so it has clearly implemented significant performance improvements: the sync now finishes about 1.8 times faster (a 43% cut in wall-clock time), even though the chain has grown since then. My guess is that they merged in the performance improvements that upstream Bitcoin Core has implemented in the years since Zcash forked the repository in 2016.

In Conclusion

Every crypto asset network has its own unique properties, and often its own participant consensus about which trade-offs are acceptable. The cost of node operation is just one of the many properties of these systems, but it's worth tracking over time so that stakeholders can make informed decisions about how to prioritize future development.