2019 Bitcoin Node Performance Tests

Testing full validation syncing performance of seven Bitcoin node implementations.

As I’ve noted many times in the past, running a fully validating node gives you the strongest security model and privacy model that is available to Bitcoin users. A year ago I ran a comprehensive comparison of various implementations to see how well they performed full blockchain validation. Now it's time to see what has changed over the past year!

The computer I use as a baseline is high-end but uses off-the-shelf hardware. I bought this PC at the beginning of 2018. It cost about $2,000 at the time.

Just set up a maxed out @PugetSystems PC on PureOS 8.0.

Core i7 8700 3.2GHz 6 core CPU

32 GB DDR4-2666

Samsung 960 EVO 1TB M.2 SSD

Synced Bitcoin Core 0.15.1 (w/maxed out dbcache) in 162 min w/peak speeds of 80 MB/s. Next step: see how much traffic I can serve on gigabit fiber.

— Jameson Lopp (@lopp) February 11, 2018

Note that no Bitcoin implementation strictly fully validates the entire chain history by default. As a performance improvement, most of them don’t validate signatures before a certain point in time, usually a year or two ago. This is done under the assumption that if a year or more of proof of work has accumulated on top of those blocks, it’s highly unlikely that you are being fed fraudulent signatures that no one else has verified. If you are, then something is incredibly wrong and the security assumptions underlying the system are likely compromised.
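To make the idea concrete, here is a minimal sketch of "skip signature checks below an assumed-valid point". The function names and the 500,000 height are hypothetical and chosen for illustration only; real implementations attach extra conditions (in Bitcoin Core's assumevalid, for example, the block being validated must be an ancestor of the assumed-valid block on the best known header chain), none of which is modelled here.

```python
from typing import Optional

# Hypothetical, heavily simplified model of skipping signature checks below
# an "assumed valid" point. Real implementations add further conditions
# that are deliberately left out of this sketch.

def should_verify_scripts(height: int, assumed_valid_height: Optional[int]) -> bool:
    """Return True if the signatures in the block at `height` get checked."""
    if assumed_valid_height is None:
        # No assumed-valid point (the spirit of assumevalid=0): check everything.
        return True
    # Blocks at or below the assumed-valid point are trusted; later ones are checked.
    return height > assumed_valid_height


def blocks_fully_verified(tip_height: int, assumed_valid_height: Optional[int]) -> int:
    """Count blocks from genesis (height 0) to `tip_height` that get signature checks."""
    return sum(
        1 for h in range(tip_height + 1)
        if should_verify_scripts(h, assumed_valid_height)
    )


if __name__ == "__main__":
    # 500,000 is an arbitrary illustrative height, not any implementation's default.
    print(blocks_fully_verified(601_300, None))      # every block checked
    print(blocks_fully_verified(601_300, 500_000))   # only blocks above 500,000
```

Forcing full validation, as in these tests, corresponds to the `None` case: every block up to the tip goes through signature checks.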

For the purposes of these tests I need to control as many variables as possible, and some implementations may skip signature checking for a longer stretch of the blockchain than others. As such, the tests I'm running do not use the default settings: I change one setting to force the checking of all transaction signatures, and I often tweak other settings to make use of the higher number of CPU cores and larger amount of RAM on my machine.

Bcoin master (commit 77d8804)

Full validation sync of @Bcoin master commit 77d8804 to block 601,300 completed in 18 hours, 29 minutes. 😲

— Jameson Lopp (@lopp) November 4, 2019

If you read last year's report, you may recall that I struggled with Bcoin and had to try syncing about a dozen different times before I found the right parameters that wouldn’t cause the NodeJS process to run out of heap memory and crash. In the past year the Bcoin team has made a number of improvements, such as rewriting the block storage and removing the UTXO cache after discovering that it actually decreased syncing performance.

My bcoin.conf:

```
checkpoints:false
cache-size:10000
sigcache-size:1000000
max-files:5000
```

Bitcoin Core 0.19

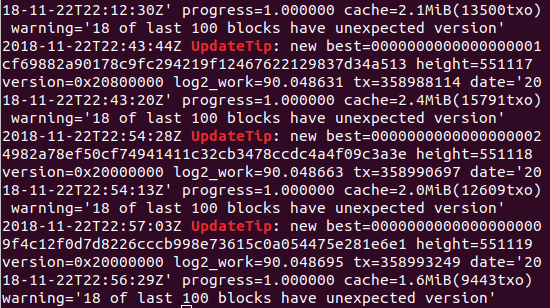

It took 399 minutes to sync Bitcoin Core 0.19 from genesis to block 601,300 with assumevalid=0 on my benchmark machine. Bottleneck continues to be CPU.

— Jameson Lopp (@lopp) October 30, 2019

Testing Bitcoin Core was straightforward as usual; once again the bottleneck was definitely CPU. I don't expect Core to get much faster because they've squeezed about as much performance as possible out of the software. My bitcoind.conf:

```
assumevalid=0
dbcache=24000
maxmempool=1000
```

btcd v0.20.0-beta

Full validation sync of btcd v0.20.0-beta to block 601,300 took my benchmark machine 3 days, 3 hours, 12 minutes. 👏CPU-bound the entire time.

— Jameson Lopp (@lopp) November 7, 2019

BTCD was basically a dead project that hadn't seen a release in the 4 years since the Conformal devs abandoned it to work on Decred. Olaoluwa Osuntokun (roasbeef) has taken the reins as primary maintainer, probably because he used to work on it years ago and now lnd uses btcd as a library. Looks like they've added better stall detection and faster pubkey parsing since last year's test. Syncing a year more of data in less time is a pretty massive improvement!

My btcd.conf:

```
nocheckpoints=1
sigcachemaxsize=1000000
```

Gocoin 1.9.7

Full validation sync of Gocoin 1.9.7 with secp256k1 library enabled took my machine 19 hours, 56 minutes to get to block 601,300. Bottleneck is unclear; CPU usage stayed steady at 50% and no other resources were maxed out.

— Jameson Lopp (@lopp) October 30, 2019

After reading through the documentation I made the following changes to get maximum performance:

- Installed secp256k1 library and built gocoin with sipasec.go

- Disabled wallet functionality via

AllBalances.AutoLoad:false

My gocoin.conf:

LastTrustedBlock: 00000000839a8e6886ab5951d76f411475428afc90947ee320161bbf18eb6048

AllBalances.AutoLoad:false

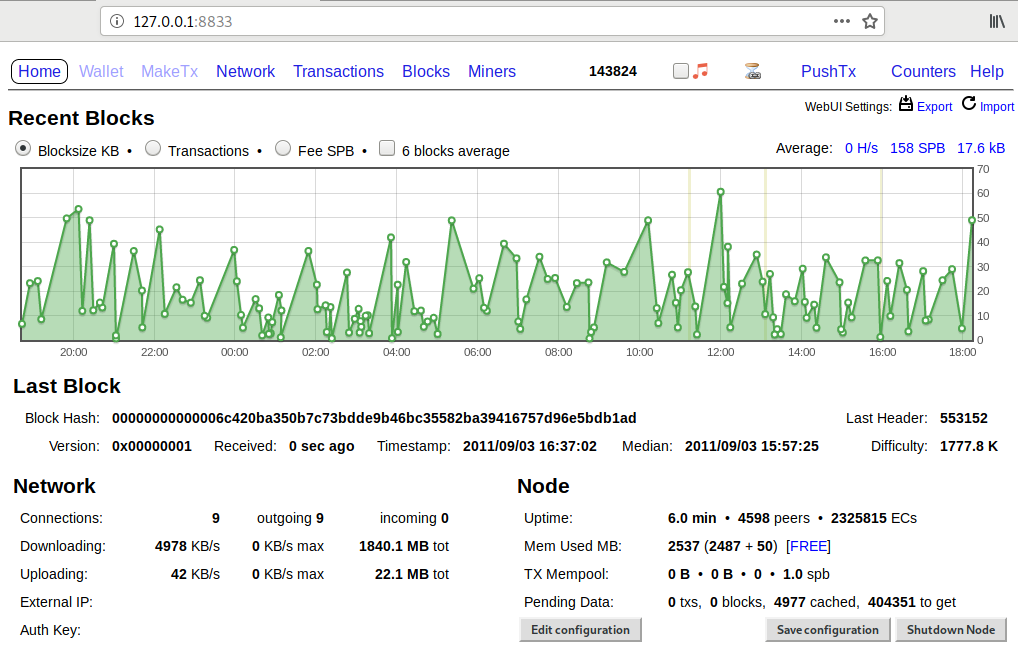

UTXOSave.SecondsToTake:0

Gocoin is still fairly fast with respect to the competition, but I don't think it synced as fast as it could have. My CPU usage was at about 20% during the early blocks and never went above 50%; I suspect this may be due to not taking advantage of hyperthreading, since my machine has 6 physical but 12 virtual CPUs. I tried increasing the number of peers from 10 to 20 and upgraded Go from 1.11 to 1.13, but neither had much of an effect. On the bright side, Gocoin still has the best admin dashboards of any node.
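A quick back-of-the-envelope check of the hyperthreading suspicion: on a CPU with 12 logical processors, 50% total usage is the same as 6 logical CPUs fully busy, which happens to equal the physical core count. That is consistent with the suspicion but doesn't prove it, since aggregate usage alone can't show how the work is scheduled. The helper below is a hypothetical illustration; in Python, `os.cpu_count()` reports the logical CPU count.

```python
# Back-of-the-envelope check: convert an aggregate CPU-usage percentage
# into the number of logical CPUs that would be fully busy.

LOGICAL_CPUS = 12    # Core i7 8700: 6 physical cores, 2 hardware threads each
PHYSICAL_CORES = 6

def busy_logical_cpus(usage_percent: float, logical_cpus: int = LOGICAL_CPUS) -> float:
    """Equivalent number of fully busy logical CPUs for a total usage percentage."""
    return usage_percent / 100 * logical_cpus

for usage in (20, 50):
    busy = busy_logical_cpus(usage)
    print(f"{usage}% total usage ~ {busy:.1f} of {LOGICAL_CPUS} logical CPUs busy "
          f"({PHYSICAL_CORES} physical cores available)")
```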

Libbitcoin Node 3.6.0

Full validation sync of Libbitcoin Node 3.6.0 to block 601,300 took my machine 27 hours, 37 minutes with a 100,000 UTXO cache.

— Jameson Lopp (@lopp) November 3, 2019

Libbitcoin Node wasn’t too bad; the hardest part was removing the hardcoded block checkpoints. To do so I had to clone the git repository, check out the “version3” branch, delete all of the checkpoints other than genesis from bn.cfg, and run the install.sh script to compile the node and its dependencies.
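The checkpoint-removal step can be scripted. This is a minimal sketch that assumes bn.cfg lists checkpoints one per line in the form `checkpoint = <block hash>:<height>`; that line format is an assumption, so compare it against your own bn.cfg before relying on the pattern. The function name is hypothetical. It keeps every other line untouched and drops only non-genesis checkpoints.

```python
import re

# ASSUMPTION: checkpoint lines look like
#   checkpoint = <64-hex-digit block hash>:<height>
CHECKPOINT_LINE = re.compile(r"^\s*checkpoint\s*=\s*([0-9a-fA-F]{64}):(\d+)\s*$")

def strip_checkpoints(cfg_text: str) -> str:
    """Remove every checkpoint line except the genesis (height 0) entry."""
    kept = []
    for line in cfg_text.splitlines():
        match = CHECKPOINT_LINE.match(line)
        if match and int(match.group(2)) != 0:
            continue  # non-genesis checkpoint: drop it
        kept.append(line)
    return "\n".join(kept) + "\n"
```

Usage would be along the lines of reading bn.cfg, passing its contents through `strip_checkpoints`, and writing the result back before running install.sh.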

My libbitcoin config:

```
[blockchain]
# I set this to the number of virtual cores since default is physical cores
cores = 12

[database]
cache_capacity = 100000

[node]
block_latency_seconds = 5
```

I noted that during the course of syncing, Libbitcoin Node wrote 3 TB to disk and read over 30 TB from it. The total writes look reasonable, but the total reads are much higher than with other implementations. There are probably more caching improvements that could be made; it was only using 5 GB of RAM by the end of the sync. There were also a number of short pauses where it didn't seem to be doing any work; presumably the queue of blocks to process was starving from time to time.
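One way to gather per-process disk totals like these on Linux is the kernel's `/proc/<pid>/io` file, whose `read_bytes` and `write_bytes` fields count traffic that actually reached the storage layer (reads served from the page cache are not included). This is a minimal sketch with hypothetical helper names; it isn't necessarily how the numbers above were collected.

```python
from pathlib import Path
from typing import Dict

def parse_proc_io(text: str) -> Dict[str, int]:
    """Parse the 'name: value' lines of a Linux /proc/<pid>/io file."""
    counters: Dict[str, int] = {}
    for line in text.splitlines():
        name, sep, value = line.partition(":")
        if sep:
            counters[name.strip()] = int(value)
    return counters

def read_process_io(pid: int) -> Dict[str, int]:
    """Linux only; reading another user's process may require elevated privileges."""
    return parse_proc_io(Path(f"/proc/{pid}/io").read_text())

def storage_totals_tb(counters: Dict[str, int]) -> Dict[str, float]:
    """Convert read_bytes/write_bytes into decimal terabytes."""
    return {
        "read_tb": counters["read_bytes"] / 1e12,
        "write_tb": counters["write_bytes"] / 1e12,
    }
```

The counters belong to the process, so they need to be sampled before the node shuts down.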

Parity Bitcoin master (commit 7fb158d)

Full validation sync of Parity Bitcoin (commit 7fb158d) to block 601,300 on my benchmark machine took 2 days, 2 hours, 10 minutes.

— Jameson Lopp (@lopp) November 10, 2019

Parity is an interesting implementation that seems to have bandwidth management figured out well but is still lacking on the CPU and disk I/O side. CPU usage stayed below 50%, possibly due to a lack of hyperthreading support, but it was using a lot of the cache I made available: 21 GB out of the 24 GB I allocated! The real bottleneck appears to be disk I/O. For some reason it was constantly churning 100 MB/s in disk reads and writes even though it was only adding about 2 MB/s worth of data to the blockchain, which is odd given the 21 GB of cache.
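Putting rough numbers on that: 100 MB/s of disk traffic against about 2 MB/s of new blockchain data is roughly a 50x amplification. If that rate had held for the entire 2 day, 2 hour, 10 minute sync it would add up to about 18 TB of disk traffic; that total is an extrapolation from the observed rate, not a measurement. The helper names are hypothetical.

```python
# Rough I/O amplification estimate for the Parity Bitcoin observation:
# disk traffic generated per unit of new blockchain data.

def io_amplification(disk_mb_per_s: float, chain_growth_mb_per_s: float) -> float:
    """Ratio of observed disk traffic to net new blockchain data."""
    return disk_mb_per_s / chain_growth_mb_per_s

def sustained_total_tb(rate_mb_per_s: float, seconds: float) -> float:
    """Total TB moved if `rate_mb_per_s` were held for `seconds` (1 TB = 10**6 MB)."""
    return rate_mb_per_s * seconds / 1_000_000

SYNC_SECONDS = (2 * 24 * 60 + 2 * 60 + 10) * 60   # 2 days, 2 hours, 10 minutes

print(io_amplification(100, 2))                   # 50.0
print(sustained_total_tb(100, SYNC_SECONDS))      # extrapolated, not measured
```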

There are probably some inefficiencies in Parity’s internal database (RocksDB) that are creating this bottleneck. I’ve had issues with RocksDB in the past while running Parity’s Ethereum node and while running Ripple nodes. RocksDB was so bad that Ripple actually wrote their own DB called NuDB.

My pbtc config:

```
--btc
--db-cache 24000
--verification-edge 00000000839a8e6886ab5951d76f411475428afc90947ee320161bbf18eb6048
```

Stratis 3.0.5

Syncing the @stratisplatform Bitcoin node has been pretty disappointing. After 4 days, 3 hours it only reached block 428,002 and then crashed due to running out of disk space. Turns out the node doesn't delete UTXOs from disk once they're spent. 77GB of blocks and 632GB of UTXOs!

— Jameson Lopp (@lopp) November 2, 2019

Stratis is an altcoin that shares many of Bitcoin's attributes, though it's a proof of stake blockchain that's meant to be a platform for other blockchains via sidechains. Stratis built their own full node implementation that's written in C# - it supports both Stratis and Bitcoin.

My Stratis 3.0.5 bitcoin.conf:

```
assumevalid=0
checkpoints=0
maxblkmem=1000
```

One thing I also noticed is that the UTXO cache appears to be hard-coded to 100,000 UTXOs; if there were a way to increase this, you could squeeze out a bit more performance. On the bright side, I requested this feature and the Stratis devs added it an hour later! However, CPU was by far the bottleneck: once it got to block 300,000, processing slowed to just a couple of blocks per second even though the queue of blocks to be processed was full and there was little disk activity. There were many times when only 1 of my 12 cores was being used, so it looks like there is plenty of room for parallelization improvements.
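Why a bigger UTXO cache helps can be shown with a toy model: a hypothetical read-through cache in front of a slower store, where every cache miss stands in for a disk read. LRU eviction is my choice for the illustration; I don't know which eviction policy Stratis uses. The workload cycles through 150 outputs twice, which is deliberately larger than the 100-entry cache (and a worst case for LRU).

```python
from collections import OrderedDict
from typing import Dict, Hashable

class UtxoCache:
    """Hypothetical read-through LRU cache in front of a slower UTXO store."""

    def __init__(self, capacity: int, backing_store: Dict[Hashable, int]):
        self.capacity = capacity
        self.store = backing_store      # stands in for the on-disk database
        self.entries = OrderedDict()    # outpoint -> amount, oldest first
        self.misses = 0                 # each miss models one disk read

    def get(self, outpoint: Hashable) -> int:
        if outpoint in self.entries:
            self.entries.move_to_end(outpoint)   # mark as most recently used
            return self.entries[outpoint]
        self.misses += 1
        value = self.store[outpoint]
        self.entries[outpoint] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)     # evict the least recently used entry
        return value

def disk_reads(capacity: int, working_set: int = 150, passes: int = 2) -> int:
    """Replay a toy workload that cycles through `working_set` outputs `passes` times."""
    store = {i: i * 1000 for i in range(working_set)}
    cache = UtxoCache(capacity, store)
    for _ in range(passes):
        for outpoint in range(working_set):
            cache.get(outpoint)
    return cache.misses

print(disk_reads(capacity=100))   # 300: cache smaller than the working set
print(disk_reads(capacity=200))   # 150: whole working set fits after the first pass
```

A real UTXO set also handles inserts and deletes on every block; this sketch only models lookups.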

Unfortunately, a critical unresolved issue is that their UTXO database doesn't seem to actually delete spent UTXOs, so you'll need several terabytes of disk space, compared with the under 300 GB used by most Bitcoin implementations today. As a result, I was unable to complete the sync because my machine only has a 1 TB drive.
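The difference between deleting spent outputs and merely marking them spent is easy to see in a toy model. This is a minimal sketch of the general pattern, not Stratis's code; the tombstone variant is a hypothetical stand-in for "spent UTXOs stay on disk". In a toy chain where each block spends the previous block's only output, the live UTXO set stays at one entry, while the never-delete store grows by one entry per block.

```python
from typing import Dict, List, Optional, Tuple

Outpoint = Tuple[str, int]                  # (txid, output index)
UtxoSet = Dict[Outpoint, Optional[int]]
SPENT = None                                # tombstone used by the "never delete" variant

def apply_block(utxos: UtxoSet, spends: List[Outpoint],
                creates: Dict[Outpoint, int], delete_spent: bool = True) -> None:
    """Spend `spends`, then add `creates` (outpoint -> amount in satoshis)."""
    for outpoint in spends:
        if utxos.get(outpoint, SPENT) is SPENT:
            raise ValueError(f"missing or already spent output: {outpoint}")
        if delete_spent:
            del utxos[outpoint]             # normal behaviour: the entry disappears
        else:
            utxos[outpoint] = SPENT         # hypothetical: entry kept as a tombstone
    utxos.update(creates)

def stored_entries_after(n_blocks: int, delete_spent: bool) -> int:
    """Toy chain: each block spends the previous block's only output and creates one."""
    utxos: UtxoSet = {}
    apply_block(utxos, [], {("tx0", 0): 5_000_000_000}, delete_spent)
    for i in range(1, n_blocks):
        apply_block(utxos, [(f"tx{i - 1}", 0)], {(f"tx{i}", 0): 5_000_000_000}, delete_spent)
    return len(utxos)

print(stored_entries_after(1000, delete_spent=True))    # 1 live entry
print(stored_entries_after(1000, delete_spent=False))   # 1000 entries, 999 of them spent
```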

Performance Rankings

- Bitcoin Core 0.19: 6 hours, 39 minutes

- Bcoin 77d8804: 18 hours, 29 minutes

- Gocoin 1.9.7: 19 hours, 56 minutes

- Libbitcoin Node 3.6.0: 1 day, 3 hours, 37 minutes

- Parity Bitcoin 7fb158d: 2 days, 2 hours, 10 minutes

- BTCD v0.20.0-beta: 3 days, 3 hours, 12 minutes

- Stratis 3.0.5.0: Did not complete; definitely over a week
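To compare the completed syncs on a single scale, the rankings can be converted to minutes and expressed as multiples of the fastest result. This sketch uses only the durations listed above (Stratis is omitted because it never finished); it also confirms that 6 hours, 39 minutes matches the 399 minutes reported for Bitcoin Core. The helper names are hypothetical.

```python
import re

# Completed syncs from the rankings above (Stratis omitted: it never finished).
RANKINGS = {
    "Bitcoin Core": "6 hours, 39 minutes",
    "Bcoin": "18 hours, 29 minutes",
    "Gocoin": "19 hours, 56 minutes",
    "Libbitcoin Node": "1 day, 3 hours, 37 minutes",
    "Parity Bitcoin": "2 days, 2 hours, 10 minutes",
    "btcd": "3 days, 3 hours, 12 minutes",
}

MINUTES_PER_UNIT = {"day": 24 * 60, "hour": 60, "minute": 1}

def to_minutes(duration: str) -> int:
    """Parse strings like '1 day, 3 hours, 37 minutes' into total minutes."""
    return sum(
        int(amount) * MINUTES_PER_UNIT[unit]
        for amount, unit in re.findall(r"(\d+)\s+(day|hour|minute)s?", duration)
    )

def relative_to_fastest(rankings):
    """Return (name, minutes, multiple of fastest) tuples, fastest first."""
    minutes = {name: to_minutes(text) for name, text in rankings.items()}
    fastest = min(minutes.values())
    return sorted(
        ((name, m, m / fastest) for name, m in minutes.items()),
        key=lambda row: row[1],
    )

for name, m, multiple in relative_to_fastest(RANKINGS):
    print(f"{name:16} {m:5d} min  {multiple:5.2f}x")
```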

Delta vs Last Year's Tests

We can see that most implementations are taking longer to sync, which is to be expected given the append-only nature of blockchains. Note that the total size of the blockchain has grown by 30% since my last round of tests, so an implementation with no new performance improvements or bottlenecks would be expected to take ~30% longer to sync.

- Bcoin 77d8804: -9 hours, 2 minutes (44% shorter)

- BTCD v0.20.0-beta: -20 hours (27% shorter)

- Bitcoin Core 0.19: +1 hour, 28 minutes (28% longer)

- Parity Bitcoin 7fb158d: +11 hours, 53 minutes (31% longer)

- Libbitcoin Node 3.6.0: +7 hours, 13 minutes (35% longer)

- Gocoin 1.9.7: +7 hours, 24 minutes (59% longer)

As we can see, bcoin and btcd have made performance improvements while it appears that Gocoin has some sort of bottleneck that is degrading performance as the blockchain grows.
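The reasoning above can be checked directly: reconstruct last year's sync time as this year's time minus the listed change, scale it by 1.30 for the larger chain, and compare the result with the actual time. The ±10% band used for "roughly in line" is an arbitrary threshold picked for this sketch. With these figures Bcoin and btcd come in well under the scaled expectation, Gocoin well over it, and Bitcoin Core, Parity and Libbitcoin within a few percent.

```python
GROWTH = 1.30  # chain grew ~30% since the previous round of tests

# (this year's sync time in minutes, change vs last year in minutes)
RESULTS = {
    "Bitcoin Core": (399, 88),
    "Bcoin": (1109, -542),
    "btcd": (4512, -1200),
    "Parity Bitcoin": (3010, 713),
    "Libbitcoin Node": (1657, 433),
    "Gocoin": (1196, 444),
}

def compare_to_growth(this_year: int, delta: int,
                      growth: float = GROWTH, tolerance: float = 0.10):
    """Return (actual / expected, verdict) where expected = last year's time * growth."""
    last_year = this_year - delta
    expected = last_year * growth
    ratio = this_year / expected
    if ratio < 1 - tolerance:
        verdict = "faster than data growth"
    elif ratio > 1 + tolerance:
        verdict = "slower than data growth"
    else:
        verdict = "roughly in line"
    return ratio, verdict

for name, (minutes, delta) in RESULTS.items():
    ratio, verdict = compare_to_growth(minutes, delta)
    print(f"{name:16} actual/expected = {ratio:.2f} ({verdict})")
```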

Exact Comparisons Are Difficult

While I ran each implementation on the same hardware to keep those variables static, there are other factors that come into play.

- There’s no guarantee that my ISP was performing exactly the same throughout the duration of all the syncs.

- Some implementations may have connected to peers with more upstream bandwidth than other implementations. This could be random or it could be due to some implementations having better network management logic.

- Not all implementations have caching; even when configurable cache options are available it’s not always the same type of caching.

- Not all nodes perform the same indexing functions. For example, Libbitcoin Node always indexes all transactions by hash — it’s inherent to the database structure. Thus this full node sync is more properly comparable to Bitcoin Core with the transaction indexing option enabled.

- Your mileage may vary due to any number of other variables such as operating system and file system performance.

Conclusion

Given that the strongest security model a user can obtain in a public permissionless crypto asset network is to fully validate the entire history themselves, I think it’s important that we keep track of the resources required to do so.

We know that due to the nature of blockchains, the amount of data that a new node syncing from scratch must validate will relentlessly continue to increase over time. So far I have run these tests on the same hardware each year, but on the bright side we know that hardware performance will also continue to improve each year.

It's important that we ensure the resource requirements for syncing a node do not outpace the hardware performance that is available at a reasonable cost. If they do, then larger and larger swaths of the populace will be priced out of self-sovereignty in these systems.