The Death of Decentralized Email

Note: if you'd prefer to consume this essay as a presentation, you can find it here.

It is said that those who do not learn from history are doomed to repeat it. I believe it is of utmost importance that proponents of decentralized protocols learn from the failures of those that have come before. The following is a review of 40 years of history for the protocol that is the foundation of email.

One of the oldest mass-adopted internet protocols in existence is SMTP - Jonathan Postel published RFC 821 which defined how it worked in 1982.

SMTP stands for “Simple Mail Transfer Protocol” - it's the standard for how to send email between two machines. SMTP has proven itself to be quite successful and is used by billions of people every day.

My Background

You may know me as a Bitcoin educator and engineer. But my previous career involved working on software and infrastructure for an email marketing company called Bronto Software. When I started working at Bronto our clients sent a few hundred thousand emails a day out through our servers. By the time I left a decade later, that number was over 100 million emails per day.

I had a front row seat to the challenges of scaling email infrastructure to such massive levels. What I learned was that email deliverability is an incredibly complex problem that could only be partially solved by technical means. As our company scaled, we ended up having to hire full-time "deliverability specialists" who were not engineers - they were relationship managers who maintained professional networks and lines of communication with other major players in the email ecosystem such as ISPs and spam blocklists.

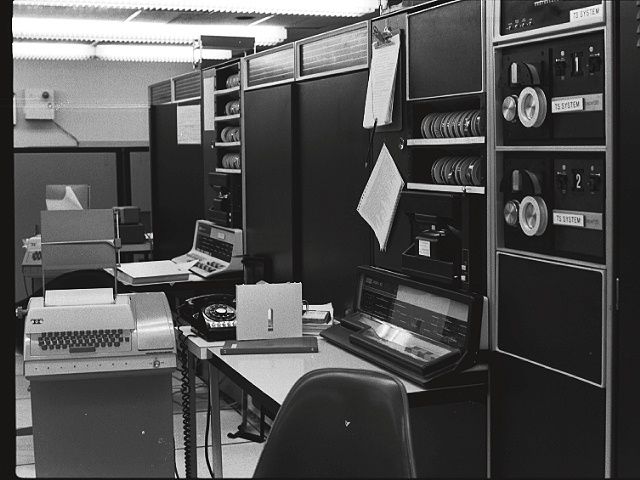

The Genesis of SMTP

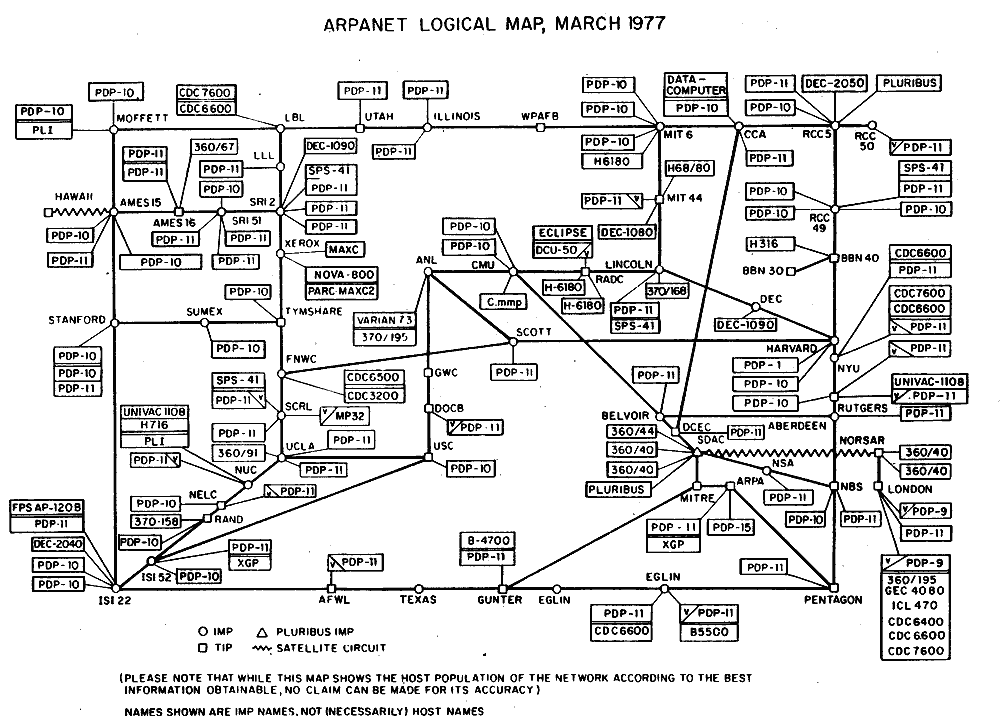

In the 1980s the Internet had not yet been commercialized and it was mostly comprised of military installations, universities, and corporate research laboratories. Connections were slow and unreliable, and the number of servers was small enough that all of the participants could recognize each other.

This is a map of ARPANET, the predecessor to the Internet, as it looked at the time.

In this setting SMTP’s emphasis on reliability instead of security was reasonable and helped contribute to its fast adoption. Many system admins helped each other out by configuring their mail servers as open relays. That meant that they would accept mail meant to be delivered to users of other systems and relay it toward its final destination. Thanks to this cooperation, email transfer on the fledgling internet stood a reasonable chance of eventual delivery. Server administrators were generally happy to help their peers and receive help in return.

In other words, despite originally being designed as a communications network that could survive nuclear attack, the early internet was not an adversarial network. Due to the difficulty and cost of getting connected to the network, administrators tended to act altruistically.

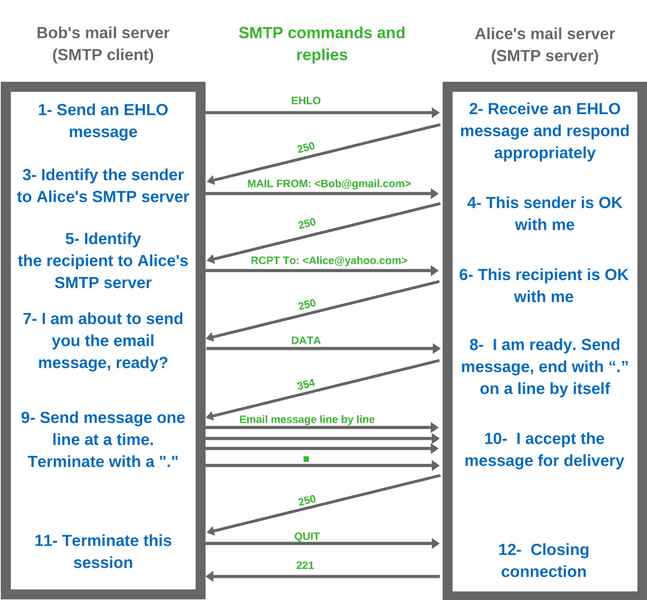

Here we can see that SMTP is a client-server protocol with a fairly straightforward back-and-forth regarding the messages that are expected to be sent.
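The back-and-forth can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `smtp_dialogue` and the example hostnames are hypothetical, the reply codes are the ones a cooperative RFC 821 server would normally give, and the server's initial 220 greeting is left out. Note that nothing in the exchange verifies who the sender claims to be.

```python
def smtp_dialogue(client_host, sender, recipients, body):
    """Return (line sent by the client, reply code expected from the server).

    Body lines carry None because the server stays silent until it sees
    the terminating dot. Hypothetical helper, for illustration only.
    """
    steps = [
        (f"HELO {client_host}", 250),       # client introduces itself
        (f"MAIL FROM:<{sender}>", 250),     # envelope sender, taken on trust
    ]
    for rcpt in recipients:
        steps.append((f"RCPT TO:<{rcpt}>", 250))  # one line per recipient
    steps.append(("DATA", 354))             # server: go ahead, end with "."
    for line in body.splitlines():
        if line.startswith("."):
            line = "." + line               # dot-stuffing keeps the body transparent
        steps.append((line, None))
    steps.append((".", 250))                # a lone dot ends the message
    steps.append(("QUIT", 221))
    return steps


if __name__ == "__main__":
    for line, code in smtp_dialogue(
        "client.example", "alice@example.org", ["bob@example.net"], "Hello Bob"
    ):
        print(f"C: {line}" + (f"    (expect {code})" if code else ""))
```

Adding more `RCPT TO` lines is all it takes to address the same message to many recipients.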

SMTP originally only supported unauthenticated, unencrypted ASCII text connections, which are vulnerable to man-in-the-middle attack, spoofing, and spam.

Early email was treated the same regardless of its content and every email user was treated equally. As long as you followed the rules of the protocol, you could expect your message to reach its destination.

ESMTP

In November 1995, RFC 1869 defined the Extended Simple Mail Transfer Protocol (ESMTP), which established a general architecture for current and future extensions intended to add features missing from the original SMTP.

ESMTP defines a consistent and manageable means by which ESMTP clients and servers can identify each other and servers can indicate which extensions they support. Message submission and SMTP-AUTH were introduced in 1998 and 1999, and both changed the nature of email delivery.

Originally, SMTP servers were internal to an organization, receiving mail for the organization from outside, and relaying messages from the organization to the outside. But over time, SMTP servers were, in practice, expanding their roles to become message submission agents for mail user agents, some of which were now relaying mail from outside the organization.

This shift, a consequence of the rapid expansion and popularity of the World Wide Web, meant that SMTP needed specific rules for relaying mail and authenticating users to prevent the relaying of unsolicited email.

Work on message submission originally began because popular mail servers often rewrite mail in an attempt to fix problems with it, for example, adding a domain name to an unqualified address.

This behavior is useful when the message being repaired is an initial submission, but is dangerous and harmful when the message originated elsewhere and is being relayed. Cleanly separating mail into submission and relay was seen as a way to allow and encourage rewriting of submissions while prohibiting rewriting of relayed mail.

As spam became more prevalent, this new paradigm was seen as a way to provide permission to send mail from an organization, as well as traceability.

Spam

The first instance of spam dates back to 1978, when Gary Thuerk sent an unsolicited marketing message to hundreds of ARPANET users. The message promoted a new product by Digital Equipment Corporation; Thuerk claims that this historic email netted $13 million in sales.

However, the resulting outcry from early email users was sufficient to dissuade others from repeating this tactic for many years. This changed in the 1990s: millions of individuals discovered the internet and signed up for inexpensive personal accounts and advertisers found a large and willing audience they could reach cheaply through this new channel.

The First Walls Go Up

The helpful nature of open relays was among the first victims of the spam influx. In the early mainstream internet, broadband connections were prohibitively expensive for all but the largest entities. Spammers quickly learned it was trivial to send a few messages with recipient lists thousands of entries long to helpful corporate servers, which would happily relay them. System admins noticed spikes in their service bills and in the number of complaints from users. It didn't take long to realize that they could no longer help their peers without incurring significant costs and ill will.

The SMTP standard, which was designed with reliability as a key feature, had to be modified to purposefully discard certain messages. This was a foreign concept and no one was sure how to proceed. Closing the open relays seemed to be a simple first step. Administrators argued passionately, and at great length, about whether this was a necessary change, or even a good idea at all. In the end though, it was universally agreed that the trusting nature of the old internet was dead, and it was insane to ignore the new reality.

Some admins took the restriction idea a step further and decided that they would not only close their own relays, but as another line of defense they would no longer accept messages from any open relays. As this tactic became best practice, admins began to share their lists of those relays by adding specially formatted entries to their domain name servers, thus allowing anyone to request this data. Thus began the era of DNS blackhole lists, which were highly controversial. For example, admins debated if it was acceptable to actively test remote servers to see if they were operating open relays. They further discussed which procedures a system administrator should follow to request removal of their server from a blacklist after disabling open relaying.
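The "specially formatted entries" follow a simple convention: the octets of the IPv4 address are reversed and prepended to the list's DNS zone, and the existence of an A record for that name means the address is listed. The sketch below assumes that convention; `dnsbl.example.org` is a placeholder zone rather than a real list, and the function names are hypothetical.

```python
import ipaddress
import socket


def dnsbl_query_name(ip, zone):
    """Build the hostname to look up for an IPv4 address in a DNS blocklist."""
    addr = ipaddress.IPv4Address(ip)  # raises ValueError on malformed input
    reversed_octets = ".".join(reversed(str(addr).split(".")))
    return f"{reversed_octets}.{zone}"


def is_listed(ip, zone):
    """Return True if the blocklist zone publishes an A record for the IP.

    A failed lookup is treated as "not listed", so a network outage is
    indistinguishable from a clean result in this simplified version.
    """
    try:
        socket.gethostbyname(dnsbl_query_name(ip, zone))
        return True
    except socket.gaierror:
        return False


if __name__ == "__main__":
    # Placeholder zone; substitute a real blocklist zone to perform a lookup.
    print(dnsbl_query_name("192.0.2.99", "dnsbl.example.org"))
```

Because the lookup is an ordinary DNS query, any mail server can consult the list at connection time with no special software, which helps explain how quickly the practice spread.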

One of the earliest blacklists was the Mail Abuse Prevention System (MAPS). The acronym MAPS is spam spelled backwards. Being certain there was an absolute right to publish an anti-spam blacklist, MAPS published a "How to Sue Us" page, inviting spammers to help them create case law. In 2000 MAPS was the named defendant in no fewer than three lawsuits, being sued by Yesmail, Media3, and survey giant Harris Interactive. In 2001 the company implemented yet another roadblock for admins by requiring a subscription to access the blacklists.

MAPS' purpose was to educate users to relay mail through an acknowledged ISP instead of running their own mail servers. Doing this brings both advantages and disadvantages. ISPs can generally afford to monitor their systems more thoroughly in order to avoid viruses, hijackers, and other threats. Furthermore, it paved the way for effectively exploiting policies like SPF, which rely upon SMTP authentication in order to block email address abuse. On the other hand, ISP email relays are incompatible with fine-grained IP address blocking: if they relay spam and get blocked, it impacts all of the ISP's customers.

The first victims of collateral damage from blacklisting efforts were those whose mail servers were blocked through no fault of their own. This happened when over-zealous or lazy blacklist operators added entire blocks of IP addresses to their lists, rather than only targeting the specific offending addresses. Some blacklist admins argued that the lists should err on the side of caution to prevent these problems, while others believed that this would put extra pressure on the open relay administrators to cease operations.

2003: The CAN-SPAM Act

The United States set standards for regulating commercial email with legislation called Controlling the Assault of Non-Solicited Pornography And Marketing – better known as the CAN-SPAM Act. President George W. Bush signed it into law in 2003, 32 years after email’s invention.

Some critics say the law didn’t go far enough. CAN-SPAM makes it a misdemeanor to spoof a “From” field and requires a way for people to opt out. However, the law did not make it illegal to send unsolicited email marketing messages. That prompted some to call it the “You-Can-Spam” act.

Over the next 20 years other laws were passed such as Canada’s Anti-Spam Legislation (CASL) and Europe’s General Data Protection Regulation (GDPR).

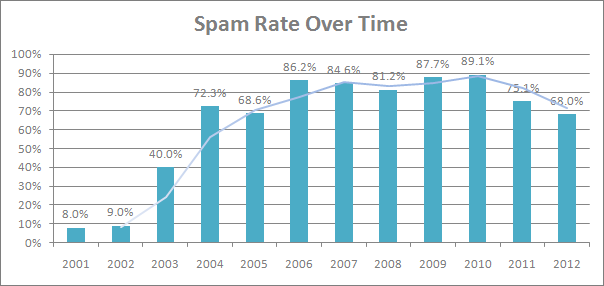

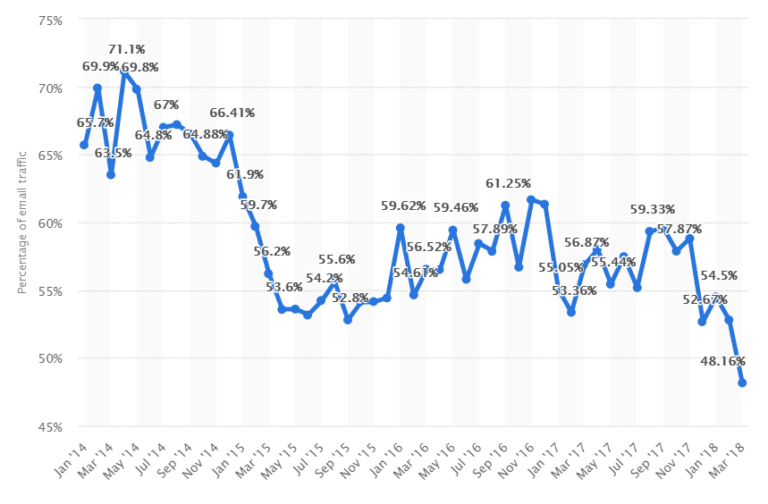

It's unclear how much these laws have actually helped. Due to the international nature of the internet it can be hard to prosecute criminals who are operating out of other countries. I've only been able to find a couple dozen prosecutions against spammers and the volume of unsolicited email has remained over 50% for most of the past 20 years.

Measuring the Effectiveness of Anti-Spam Policies

It's hard to get consistent, much less accurate, statistics of the prevalence of spam. It's hard to track given the peer to peer nature of email, and even providers who have tracked it tended to only do so for a period of several years.

The mid 2000s were when botnets came of age, spiking the volume of spam to over 80% of all email, peaking at 89% in 2010.

In 2011 the spam rate dropped to 75%. The decline was the result of the takedown of three massive botnets as well as unrest among pharmaceutical spam-sending gangs.

Significant declines came again in 2015, but mostly because of a trend of criminal gangs switching from spamming to malware and ransomware. As you can see, it's a never-ending battle. Even today it’s estimated that over 50% of all email is spam.

The Content Filtering Era

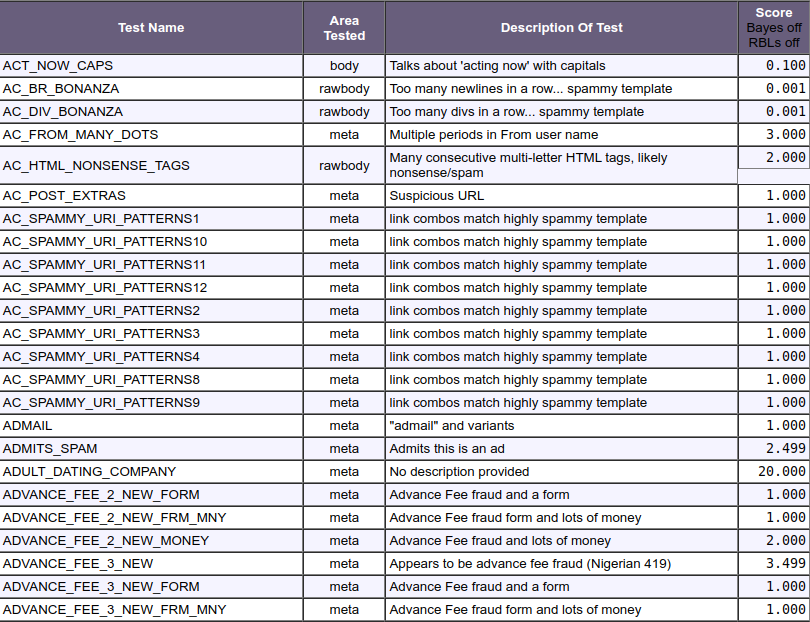

With email abuse rising, ISPs had to take measures to avoid cluttering their customers’ mailboxes with unwanted, irrelevant emails. To counter the increasing volume of (supposed) spam, ISPs started to use crude filters based on keywords, patterns or special characters. Over time this evolved into more complex Bayesian filters. SpamAssassin was the most widely adopted project, with over a thousand rules.
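To give a feel for what a Bayesian filter does, here is a minimal naive Bayes sketch. It is not how SpamAssassin or any particular provider implemented filtering; the class name, the whitespace tokenizer, and the add-one smoothing are all simplifying assumptions.

```python
import math
from collections import Counter


class TinyBayesFilter:
    """Toy naive Bayes classifier over whitespace-separated words."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = {"spam": 0, "ham": 0}

    def train(self, label, text):
        """Record one labelled message ("spam" or "ham")."""
        self.message_counts[label] += 1
        self.word_counts[label].update(text.lower().split())

    def spam_probability(self, text):
        """Estimate P(spam | words) with add-one (Laplace) smoothing."""
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_msgs = sum(self.message_counts.values())
        log_score = {}
        for label in ("spam", "ham"):
            counts = self.word_counts[label]
            total_words = sum(counts.values())
            # Smoothed prior, so an untrained class never yields log(0).
            score = math.log((self.message_counts[label] + 1) / (total_msgs + 2))
            for word in text.lower().split():
                score += math.log((counts[word] + 1) / (total_words + len(vocab) + 1))
            log_score[label] = score
        # Turn the two log scores into a probability; clamp to avoid overflow.
        diff = max(min(log_score["ham"] - log_score["spam"], 700), -700)
        return 1 / (1 + math.exp(diff))


if __name__ == "__main__":
    f = TinyBayesFilter()
    f.train("spam", "win free money now")
    f.train("ham", "meeting notes attached")
    print(round(f.spam_probability("free money"), 3))
```

A filter like this only knows the words it was trained on, so a legitimate newsletter that happens to share vocabulary with spam is scored as spam.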

While partially effective, these measures were still inaccurate and caused many false positives – emails were filtered or blocked even though they were perfectly fine and actually wanted by recipients. ISPs tried their best to improve matters by guessing what their users actually wanted in their inbox, but early attempts to create accurate filters failed spectacularly.

More Walls Are Erected

While open relays died off in the 90s, providers generally scraped by with content based filters for the next decade. But as high speed residential internet connections became more readily available, spammers wrote malware to infect computers on residential ISP networks, as most ignorant users wouldn't even know their machines had joined a botnet. Shortly thereafter, many ISPs started completely blocking traffic on port 25, which is the default port for SMTP. This was the only way they could figure to curtail the flood of spam.

Proof of Reputation

The fact that email spam filtering was so inaccurate led ISPs to offer channels of communication to senders and ESPs (email service providers). This, in turn, resulted in whitelisting for legitimate senders, to get around the limitations of the static, content-based spam filters. It was the beginning of the “postmaster departments” at ISPs. This worked pretty well for a while, but of course this new way for senders to get their emails delivered was not bulletproof. Misuse continued as the ISPs often still didn’t have enough data to prove the claims of their senders to be legitimate and their emails to be desired by recipients. Having good ‘ISP relations’ became crucial because now it was human decisions that determined if your emails were blocked, sent to the spam folder, or delivered to the inbox.

The next major development in email deliverability included the standardization of reputation scores. ‘SenderScore’ and other reputation systems emerged at this time. False positives were further reduced by adding sender authentication mechanisms like Sender Policy Framework (SPF), Sender ID, and DomainKeys Identified Mail (DKIM).
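As a rough illustration of how one of these mechanisms operates, the sketch below evaluates a Sender Policy Framework record against a connecting IP address. It is deliberately incomplete: it assumes the record has already been fetched from the sending domain's DNS TXT entry, handles only the `ip4`, `ip6`, and `all` mechanisms, and skips everything that needs further DNS lookups. The function name `spf_check` is hypothetical.

```python
import ipaddress

# SPF qualifiers and the result each one produces when its mechanism matches.
QUALIFIERS = {"+": "pass", "-": "fail", "~": "softfail", "?": "neutral"}


def spf_check(record, sender_ip):
    """Evaluate a (pre-fetched) SPF record for a connecting IP address."""
    ip = ipaddress.ip_address(sender_ip)
    terms = record.split()
    if not terms or terms[0].lower() != "v=spf1":
        return "none"  # not an SPF record at all
    for term in terms[1:]:
        qualifier = "+"  # the default qualifier is "pass"
        if term[0] in QUALIFIERS:
            qualifier, term = term[0], term[1:]
        mechanism, _, value = term.partition(":")
        if mechanism in ("ip4", "ip6"):
            if ip in ipaddress.ip_network(value, strict=False):
                return QUALIFIERS[qualifier]
        elif mechanism == "all":
            return QUALIFIERS[qualifier]
        # a, mx, include, redirect, ... need DNS lookups and are skipped here.
    return "neutral"


if __name__ == "__main__":
    record = "v=spf1 ip4:192.0.2.0/24 -all"
    print(spf_check(record, "192.0.2.10"), spf_check(record, "198.51.100.7"))
```

Every input to the decision is an IP address or a DNS record.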

Of course, if you're familiar with how these technologies work then you'll notice that most of them are reliant upon centralized gatekeepers who assign IP addresses and control domain registrations. None of these actually provide email senders with any sovereignty.

The fatal flaw with choosing to implement reputation based delivery filtering is that there was no decentralized online reputation service; as such, this essentially added a highly centralized meta protocol layer on top of the decentralized simple mail transfer protocol.

Centralization

As spam became a bigger problem over the years and the "accepted" solution was reputation, this imposed a high cost upon being able to process the receipt of large volumes of email... this did not scale for end-users. Because the approach to spam prevention was not to seek economic solutions that dissuade high volume senders, this imposed costs upon receivers. The complexity required to maintain reputation lists had to be outsourced to specialists and the increasing cost of sifting through a deluge of incoming mail priced out all but the biggest businesses.

After self-hosting my email for twenty-three years I have thrown in the towel 😩

— Carlos Fenollosa (@cfenollosa) September 4, 2022

Email is now an oligopoly, a service gatekept by a few big companies which does not follow the principles of net neutrality.

As a result, today over 90% of email users are captured by 5 companies.

Today’s email servers will claim to accept incoming mail but will delete it as soon as it is received, a practice referred to as "blackholing." The email servers operated by tech giants permanently blacklist wide swaths of IP addresses and delete their emails without notice. Their back end infrastructure runs algorithms across huge datasets so that most spam doesn't appear in your inbox.

Does it work? Sure. But it comes at a great cost that few even realize is being paid.

Proof of Engagement

Recent years have seen further changes to email spam filtering and to how providers assess what recipients actually want to receive, because the problem has now grown larger than merely unsolicited email. The floods are so great that many people receive more legitimate email than they can possibly read.

Instead of relying on their own arbitrary judgement, email services have placed the power firmly in the hands of their users by offering more sophisticated features for managing incoming mail. ISPs have embraced "Big Data" and perform analytics across all the actions of their users to determine which emails are most desirable. This vast amount of data collected for engagement metrics has helped to get rid of false positives almost entirely.

Some engagement examples:

- Moving an email to spam or trash reflects poorly on the sender.

- Keeping an email in the inbox for a long time / replying / forwarding shows positive engagement.

- Adding the sender to an address book is the best indication that their emails are desired.

Of course, while these engagement metrics are statistically sound, they are of no use to an email sender unless they can first jump through all of the hoops previously mentioned in order to actually get delivered to the inbox in the first place.

Anti-Spam Measures

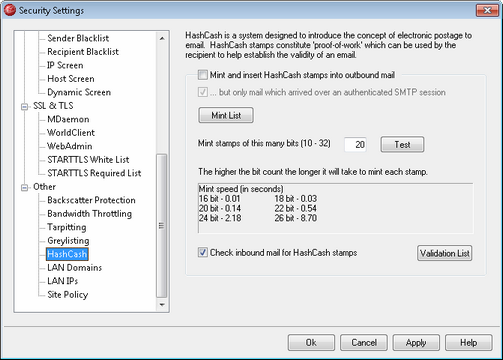

In 1997 Adam Back realized that the root problem of spam was that it was cheap to send. He published the hashcash whitepaper as a solution to impose a cost upon sending email as opposed to receiving it.

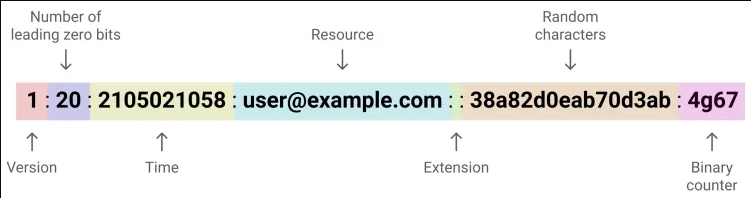

Hashcash is a cryptographic hash-based proof-of-work algorithm that requires a configurable amount of work to solve a given puzzle, while the proof of that computation can be verified almost instantly by anyone. A hashcash stamp is added to the header of an email to prove the sender has expended CPU cycles calculating the stamp prior to sending the email. In other words, because a valid stamp is overwhelmingly unlikely to be found without first spending a certain amount of computation, the sender is unlikely to be a spammer: generating stamps at high volume would be computationally prohibitive.

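A hashcash header carries a stamp made up of colon-separated fields: a version number, the number of leading zero bits the stamp claims, a date, the resource (typically the recipient's address), an optional extension field, a random string, and a counter.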

When a recipient receives an email, it first checks that the email has the hashcash header. Then it hashes the header and checks that it has the required number of leading zero bits. The recipient only needs to compute the hash once. So it takes far less time to verify a stamp than to find a valid one.
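The asymmetry between minting and checking a stamp is easy to see in code. The following is a simplified sketch in the spirit of hashcash version 1, not a compatible implementation: it assumes SHA-1 over a stamp of the form `1:bits:date:resource:ext:rand:counter`, uses a hexadecimal counter, and ignores the date-expiry and double-spend checks a real verifier would perform. The function names are hypothetical.

```python
import hashlib
import secrets
from datetime import datetime, timezone


def leading_zero_bits(digest):
    """Count the zero bits at the start of a hash digest."""
    return len(digest) * 8 - int.from_bytes(digest, "big").bit_length()


def mint(resource, bits=12):
    """Search for a stamp whose SHA-1 hash starts with `bits` zero bits.

    On average this takes about 2**bits hash attempts.
    """
    date = datetime.now(timezone.utc).strftime("%y%m%d")
    rand = secrets.token_hex(8)
    counter = 0
    while True:
        stamp = f"1:{bits}:{date}:{resource}::{rand}:{counter:x}"
        if leading_zero_bits(hashlib.sha1(stamp.encode()).digest()) >= bits:
            return stamp
        counter += 1


def verify(stamp, resource, min_bits=12):
    """Check a stamp with a single hash (no expiry or double-spend checks)."""
    fields = stamp.split(":")
    if len(fields) != 7 or fields[0] != "1" or not fields[1].isdigit():
        return False
    claimed_bits = int(fields[1])
    if claimed_bits < min_bits or fields[3] != resource:
        return False
    digest = hashlib.sha1(stamp.encode()).digest()
    return leading_zero_bits(digest) >= claimed_bits


if __name__ == "__main__":
    stamp = mint("bob@example.net", bits=12)
    print(stamp, verify(stamp, "bob@example.net"))
```

The sketch defaults to 12 bits so that it runs quickly. Each additional bit doubles the sender's expected work, while the recipient's cost stays at one hash.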

Spammers often maximize the amount of spam they can send by delivering each message to hundreds or thousands of recipients simultaneously. However, hashcash requires a separate stamp for each individual recipient, which stops this trick.

SpamAssassin has been able to check for Hashcash stamps since version 2.70, assigning a negative score (i.e. less likely to be spam) for valid, unspent hashcash stamps. However, although the hashcash plugin is on by default, it still needs to be configured with a list of address patterns that must match against the hashcash resource field before it will be used.

Why wasn’t hashcash widely adopted? One reason was that there was a chicken-and-egg problem for it to work; you’d want almost all email users to nearly simultaneously migrate their email clients and servers to start supporting hashcash, otherwise you’d see massive amounts of legitimate emails get rejected for not including valid hashcash headers.

Surveying The Damage

The unfortunate path we’ve taken is at least, in part, due to the fact that the hacky content and reputation filtering solutions could be adopted piecemeal by individual server admins. This resulted in the multi-decade decline as the proverbial frog was slowly boiled in the pot and individual server admins threw up their hands and gave in to the new email overlord tech giants.

What are we left with?

• You can't successfully use a home email server.

• You can't successfully use an email server on a (cloud) VPS.

• You can't successfully use an email server on a bare metal machine in your own datacenter.

At some point your IP address is bound to be banned, either by an IP neighbor sending spam, one of your own users being compromised, or from any number of arbitrary reasons. It's not a matter of "if", but a matter of "when." One wrong move or unfortunate event and you can say goodbye to your deliverability.

These days, if you want to build services that send email, you have to pay an email service provider that has been blessed by others in the industry. We have created an industry of middlemen grifters who are incentivized to continue gatekeeping because it sustains their anti-competitive moat.

As such, it is my distinct displeasure to declare the death of SMTP. The protocol is no longer usable. And as we can see, this devolution occurred organically.

There was no grand conspiracy to capture the network by a malevolent authority.

There was no single inflection point at which everything fell apart.

Rather, we arrived at this point via the compounding effects of many minor engineering decisions made over many years by many different people. Decisions that did not prioritize preserving the low cost of operating your own email service. You could perhaps call it "commercial capture."

I’m ashamed to admit that I was a part of the problem. I didn't give it too much thought at the time, because I wasn’t looking at the big picture… I was merely focused on my job of making our email infrastructure work by any means necessary.

What's This Got to do with Bitcoin?

The following visualization is a thing of beauty. It’s a (slowed down) animation of a bitcoin block being gossiped across the peer to peer network of nodes. This is a bird’s eye view of self governance in a voluntary system.

The key, as we can see, comes down to the cost of the individual user being able to operate as a sovereign entity on a peer to peer network. If the cost of doing so becomes too high then users will outsource operations to third parties and the number of peers will shrink to the point that a handful of entities become gatekeepers and can dictate the governance and rules of the network, even without formally changing the underlying protocol.

This is why, if we are to preserve Bitcoin's valuable properties, we must reject any changes to the ecosystem that substantially raise the cost of using the protocol directly. This includes things like running a fully validating node, securing your wealth, and being able to transact freely. It is not a stretch to imagine similar draconian mechanisms being used to ring-fence the Bitcoin protocol. We already see it being done under the names of anti-money laundering and know your customer policies. And you can be sure that any such measures will be marketed to the world as being for our own good.

If you think about it, much of Bitcoin’s scaling debate was a fight against an attempt by large companies that were trying to capture the network and improve the user experience "for our own good." Thus I am optimistic that we can win these battles.

My fellow Bitcoiners: we must remain vigilant and we must push back against the creeping advance of tyranny. If we become complacent, if we settle for convenience over security, we can expect this elegant protocol to morph into a monster.