2022 Bitcoin Node Performance Tests

As I’ve noted many times in the past, backing your bitcoin wallet with a fully validating node gives you the strongest security model and privacy model that is available to Bitcoin users. Four years ago I started running an annual comprehensive comparison of various implementations to see how well they performed full blockchain validation. Now it's time to see what has changed over the past year!

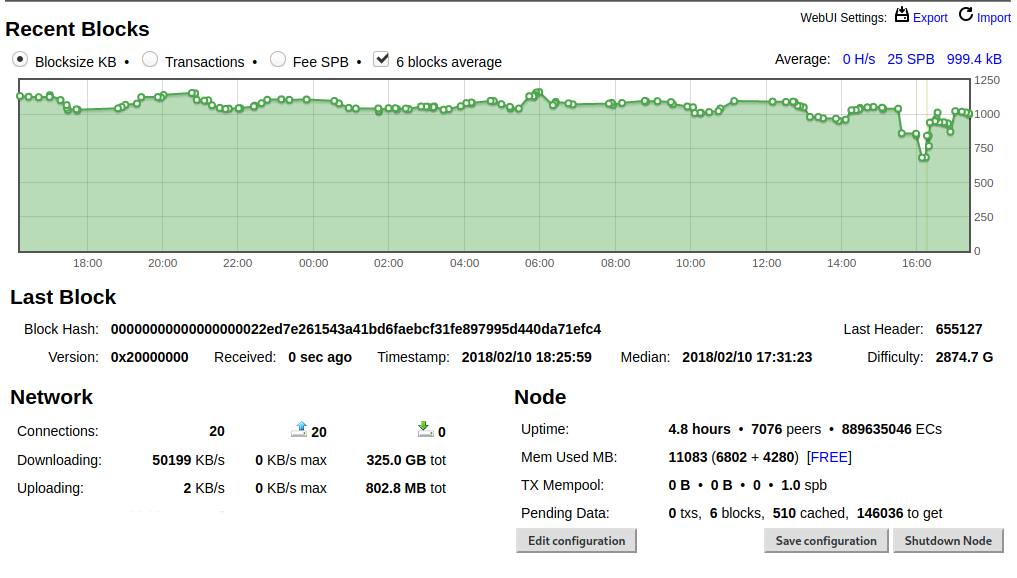

The computer I use as a baseline is high-end but uses off-the-shelf hardware. I bought this PC at the beginning of 2018. It cost about $2,000 at the time.

Just set up a maxed out @PugetSystems PC on PureOS 8.0.

— Jameson Lopp (@lopp) February 11, 2018

Core i7 8700 3.2GHz 6 core CPU

32 GB DDR4-2666

Samsung 960 EVO 1TB M.2 SSD

Synced Bitcoin Core 0.15.1 (w/maxed out dbcache) in 162 min w/peak speeds of 80 MB/s. Next step: see how much traffic I can serve on gigabit fiber. pic.twitter.com/QOvuwPgCgy

Note that no Bitcoin implementation strictly fully validates the entire chain history by default. As a performance improvement, most of them don’t validate signatures before a certain point in time. This is considered safe because those blocks and transactions are buried under so much proof of work. Creating a blockchain that contained invalid transactions before that point would require so many mining resources that it would fundamentally break certain security assumptions upon which the network operates.

For the purposes of these tests I need to control as many variables as possible; some implementations may skip signature checking for a longer period of time in the blockchain than others. As such, the tests I'm running do not use the default settings - I change one setting to force the checking of all transaction signatures and I often tweak other settings in order to make use of the higher number of CPU cores and amount of RAM on my machine.
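To make the effect of that one setting concrete, here is a toy sketch in Python. It is not how any particular implementation decides what to skip; Bitcoin Core, for example, keys its cutoff to a block hash rather than a height and applies further conditions. The names `Block`, `must_check_signatures`, and `assumed_valid_height` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Block:
    height: int
    signature_count: int


def must_check_signatures(block: Block, assumed_valid_height: Optional[int]) -> bool:
    """Toy rule: signatures at or below the trusted height are skipped."""
    if assumed_valid_height is None:
        # Equivalent in spirit to the "check everything" setting used in these tests.
        return True
    return block.height > assumed_valid_height


def signatures_to_verify(chain: Iterable[Block], assumed_valid_height: Optional[int]) -> int:
    """Count the signatures a node would verify under the toy rule."""
    return sum(
        block.signature_count
        for block in chain
        if must_check_signatures(block, assumed_valid_height)
    )


# Five made-up blocks (heights 0 to 4) with ten signatures each.
chain = [Block(height=h, signature_count=10) for h in range(5)]
print(signatures_to_verify(chain, None))  # 50: every signature is checked
print(signatures_to_verify(chain, 3))     # 10: only the block above height 3
```

The point is simply that disabling the shortcut makes every implementation do the same signature work, which is what makes the timings comparable.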

Also, in order to ensure that the bandwidth of peers on the public network is not a bottleneck and potential source of inconsistency, I now sync between two nodes on my local network.

The amount of data in the Bitcoin blockchain is relentlessly increasing with every block that is added, thus it's a never-ending struggle for node implementations to continue optimizing their code in order to prevent the initial sync time for a new node from becoming obscenely long. After all, if it becomes unreasonably expensive or time consuming to start running a new node, most people who are interested in doing so will choose not to, which weakens the overall robustness of the network.

Last year's test was for syncing to block 705,000 while this year's is syncing to block 760,000. This is a data increase of 17% from 370GB to 434GB. As such, we should expect implementations that have made no performance changes to take about 17% longer to sync than 1 year ago.

What's the absolute best case syncing time we could expect if you had limitless bandwidth and disk I/O? Since you have to perform ~2.48 billion ECDSA verification operations in order to reach block 760,000 and it takes my machine about 4,500 nanoseconds per operation via libsecp256k1... it would take my machine 3.1 hours to verify the entire blockchain if bandwidth and disk I/O were not bottlenecks. Note that last year it took my machine 4,600 nanoseconds per ECDSA verify operation; the secp256k1 library keeps being further optimized.
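The arithmetic behind that lower bound is easy to reproduce. The sketch below uses only the figures quoted above and assumes the verifications run back to back at the quoted per-operation cost; the text does not say whether that cost reflects one core or all cores, so treat the result as a rough floor. The function name `cpu_bound_hours` is my own.

```python
ECDSA_VERIFICATIONS = 2.48e9  # approximate operations needed to reach block 760,000
NS_PER_VERIFY_2022 = 4_500    # this year's measured cost per verification
NS_PER_VERIFY_2021 = 4_600    # last year's measured cost per verification


def cpu_bound_hours(operations: float, ns_per_op: float) -> float:
    """Hours needed to run `operations` back to back at `ns_per_op` nanoseconds each."""
    seconds = operations * ns_per_op / 1e9
    return seconds / 3600


this_year = cpu_bound_hours(ECDSA_VERIFICATIONS, NS_PER_VERIFY_2022)
at_old_cost = cpu_bound_hours(ECDSA_VERIFICATIONS, NS_PER_VERIFY_2021)

print(f"At 4,500 ns per verify: {this_year:.1f} hours")    # 3.1 hours
print(f"At 4,600 ns per verify: {at_old_cost:.1f} hours")  # 3.2 hours
```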

On to the results!

Bcoin v2.2.0

Full validation sync of @Bcoin v2.2.0 to block 760,000 completed in 1 day, 8 hours, 8 min on my benchmark machine.

— Jameson Lopp (@lopp) November 30, 2022

There was no new release of Bcoin in the past year. Syncing Bcoin 2.2.0 to height 760,000 used:

- 100 MB disk reads

- 4 TB disk writes

- 2.2 GB RAM

My bcoin.conf:

only:<local node IP address>

network:main

checkpoints:false

cache-size:10000

sigcache-size:1000000

max-files:5000

Bitcoin Core 24.0

Full validation sync of Bitcoin Core 24.0 to block 760,000 took my benchmark machine 430 minutes.

— Jameson Lopp (@lopp) November 28, 2022

The full sync used:

- 10 GB RAM

- 160 MB disk reads

- 500 GB disk writes

My bitcoind.conf:

connect=<local node IP address>

assumevalid=0

dbcache=24000

disablewallet=1

Bitcoin Knots 23.0

Full validation sync of Bitcoin Knots 23.0 to block 760,000 took my benchmark machine 7 hours 23 minutes.

— Jameson Lopp (@lopp) December 15, 2022

Bitcoin Knots is effectively Bitcoin Core plus a set of patches, so it's reasonable to expect the performance to be similar. Worth noting that Knots 24.0 was not yet released, so the comparison is not as close as it could be.

The full sync used:

- 10 GB RAM

- 55 MB disk reads

- 460 GB disk writes

- 423 GB download bandwidth

My bitcoind.conf:

assumevalid=0

dbcache=24000

maxmempool=1000

Blockcore 1.1.37

Blockcore is a successor of Stratis, a Bitcoin node implementation that I've never been able to successfully sync to chain tip.

Getting started wasn't great, as I hit 3 different undocumented issues that I had to overcome in order to get the node running.

Full validation sync of Blockcore 1.1.3.7 Bitcoin node on my benchmark machine reached block height 319853 after 19 hours 28 minutes and froze without throwing any warnings or errors.

— Jameson Lopp (@lopp) December 16, 2022

It would seem that Blockcore has not fixed the underlying issue that has been present in the Stratis node implementation for the past 4 years.

My Blockcore bitcoin.conf:

assumevalid=0

checkpoints=0

maxblkmem=1000

maxcoinviewcacheitems=1000000

connect=localNetworkNodeIP:8333

At the time the node froze it had consumed:

- 13 GB RAM

- 13 GB disk reads

- 295 GB disk writes

- 24 GB bandwidth

btcd v0.23.3

Full validation sync of btcd v0.23.3 to block 760,000 took my benchmark machine 2 days, 19 hours, 27 minutes.

— Jameson Lopp (@lopp) December 4, 2022

Huge speedup this year!

The full sync used:

- 5 GB RAM

- 650 GB disk reads

- 11.3 TB disk writes

BTCD has significantly reduced its disk I/O usage since last year, which probably accounts for the bulk of the speedup. I noted that it still doesn't really use more than half of the available CPU cycles, so I think there's still some low hanging fruit to grab.

My btcd.conf:

nocheckpoints=1

sigcachemaxsize=1000000

connect=<local node IP address>

Gocoin 1.10.3-pre

Technically, Gocoin has not tagged a release in over 18 months, well before the version I used in last year's test. I compiled commit hash 6960322f...

Full validation sync of Gocoin 1.10.3 (prerelease) took my benchmark machine 7 hours 15 minutes to get to block 760,000.

— Jameson Lopp (@lopp) November 30, 2022

I made several changes to get maximum performance according to the documentation:

- Installed secp256k1 library and built gocoin with sipasec.go

- Set several new config options, noted below

My gocoin.conf:

LastTrustedBlock: 00000000839a8e6886ab5951d76f411475428afc90947ee320161bbf18eb6048

AllBalances.AutoLoad:false

UTXOSave.SecondsToTake:0

Stats.NoCounters:true

Memory.CacheOnDisk:false

ConnectOnly:<local node IP address>

Gocoin still has the best dashboard of any node implementation.

Gocoin reached block 705,000 in 6 hours 12 min; 30% faster than last year!

Gocoin used:

- 22.4 GB RAM

- 435 GB download bandwidth

- 29 MB disk reads

- 352 GB disk writes

How did Gocoin make such massive improvements?

- 2% improvement from libsecp256k1 changes

- Recommending that Stats.NoCounters be set to true

- Recommending that Memory.CacheOnDisk be set to false; Gocoin used 50% more RAM for caching

- Setting ConnectOnly to a local network peer

Interestingly, the last option resulted in Gocoin using about 38% less bandwidth: 435 GB this year versus 701 GB for last year's sync. Presumably this is because it's no longer downloading redundant blocks from multiple peers.

Libbitcoin Server 3.6.0

Full validation sync of Libbitcoin Server 3.6.0 to block 760,000 took my benchmark machine 2 days, 8 hours, 27 minutes.

— Jameson Lopp (@lopp) December 14, 2022

Libbitcoin Server wasn’t too bad — though it has been over 3 years since their last release. They have been working on an overhauled 4.0 release for a while; hopefully it will be ready for next year's tests!

My libbitcoin config:

[network]

peer = <local network node IP address>:8333

outbound_connections = 1

[blockchain]

# I set this to the number of virtual cores since default is physical cores

cores = 12

checkpoint = 000000000019d6689c085ae165831e934ff763ae46a2a6c172b3f1b60a8ce26f:0

[database]

cache_capacity = 100000

During the course of syncing, Libbitcoin Server used:

- 77 TB disk reads

- 5.5 TB disk writes

- 3.4 GB RAM

- 760 GB download bandwidth

There are probably more caching improvements that could be made. While it was using all the CPU cores, they were only hovering around 30% utilization. The bottleneck is disk I/O, as I was seeing disk reads hovering in the 150 MB/s to 220 MB/s range. It looks like the reason for the extremely high disk reads is that it very quickly starts to use my hard drive's swap partition for its UTXO cache even though my machine had tons of RAM available.

Mako

Full validation sync of Mako commit hash 6d4d5810... took 23 hours 44 minutes to sync to block 760,000 on my benchmark machine.

— Jameson Lopp (@lopp) December 10, 2022

Mako is a fairly new implementation by Chris Jeffrey that's written in C. In order to create a production optimized build I built the project via:

cmake . -DCMAKE_BUILD_TYPE=Release

And ran it with node -checkpoints=0 -connect=<LAN node> -maxconnections=1

CPU usage was only around 50%; the bottleneck here is clearly disk I/O, as I observed mako constantly writing at 100 MB/s. This is because mako has not yet implemented a UTXO cache and thus has to write UTXO set changes to disk every time it processes a transaction.

Unlike last year, Mako no longer creates high load / 10 second pauses on my machine after block 400,000 or so. Looks like Chris fixed the file system flushing issue.

During the course of syncing, Mako used:

- 380 MB RAM

- 230 MB disk reads (95% less than last year)

- 4 TB disk writes (33% less than last year)

- 425 GB download bandwidth

Stratis 1.3.2.4

Full validation sync of Stratis 1.3.2.4 Bitcoin node on my benchmark machine reached block height 399697 after 1 day 6 hours 37 minutes and froze without throwing any warnings or errors.

— Jameson Lopp (@lopp) December 12, 2022

I've been trying to sync Stratis nodes for several years but they've always failed to complete a sync to the tip of the blockchain. One thing I noted this year is that their binary releases do not include the Bitcoin node binary; you have to run it yourself from the source code. The Bitcoin node appears to be a second class citizen that doesn't get much attention from the devs.

My Stratis bitcoin.conf:

assumevalid=0

checkpoints=0

maxblkmem=1000

maxcoinviewcacheitems=1000000

connect=localNetworkNodeIP:8333

At the point the node froze it had consumed:

- 9 GB RAM

- 72 GB disk reads

- 2.1 TB disk writes

I'm marking this as "incomplete" once again. This will likely be the last time I test this implementation since it has failed to sync for 4 years.

Performance Rankings

- Bitcoin Core 24.0: 7 hours, 10 minutes

- Gocoin 1.10.3: 7 hours, 15 minutes

- Bitcoin Knots 23.0: 7 hours, 23 minutes

- Mako 6d4d5810: 23 hours, 44 minutes

- Bcoin 2.2.0: 1 day, 8 hours, 8 minutes

- Libbitcoin Server 3.6.0: 2 days, 8 hours, 27 minutes

- BTCD 0.23.3: 2 days, 19 hours, 27 minutes

- Blockcore 1.1.37: incomplete

- Stratis 1.3.2.4: incomplete
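For a sense of scale, the completed syncs can be converted to minutes and expressed as multiples of the fastest time. This is a small sketch using only the durations listed above; the helper name `to_minutes` is my own, and the two incomplete syncs are left out.

```python
import re

# Completed syncs, copied from the rankings above.
RESULTS = {
    "Bitcoin Core 24.0": "7 hours, 10 minutes",
    "Gocoin 1.10.3": "7 hours, 15 minutes",
    "Bitcoin Knots 23.0": "7 hours, 23 minutes",
    "Mako": "23 hours, 44 minutes",
    "Bcoin 2.2.0": "1 day, 8 hours, 8 minutes",
    "Libbitcoin Server 3.6.0": "2 days, 8 hours, 27 minutes",
    "BTCD 0.23.3": "2 days, 19 hours, 27 minutes",
}

UNIT_MINUTES = {"day": 1440, "hour": 60, "minute": 1}


def to_minutes(duration: str) -> int:
    """Convert text like '1 day, 8 hours, 8 minutes' into total minutes."""
    total = 0
    for amount, unit in re.findall(r"(\d+)\s*(day|hour|minute)", duration):
        total += int(amount) * UNIT_MINUTES[unit]
    return total


ranked = sorted(RESULTS.items(), key=lambda item: to_minutes(item[1]))
fastest = to_minutes(ranked[0][1])

for name, duration in ranked:
    minutes = to_minutes(duration)
    print(f"{name:<24} {minutes:>5} min  {minutes / fastest:4.1f}x")
```

By this measure the three fastest implementations finish within a few percent of each other, while the slowest completed sync takes more than nine times as long as the fastest.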

Delta vs Last Year's Tests

Remember that the total size of the blockchain has grown by 17% since my last round of tests, thus we would expect that an implementation with no new performance improvements or bottlenecks should take ~17% longer to sync.

- BTCD 0.23.3: -1 day, 11 hours, 48 minutes (35% shorter)

- Gocoin 1.10.3: -51 minutes (10.5% shorter)

- Mako 6d4d5810: -26 minutes (2% shorter)

- Bitcoin Core 24.0: +17 minutes (4% longer)

- Bcoin 2.2.0: +4 hours, 21 minutes (16% longer)

- Libbitcoin Server 3.6.0: +9 hours, 46 min (21% longer)

As we can see, each of the 4 implementations that made code changes in the past year (BTCD, Gocoin, Mako, and Bitcoin Core) improved its syncing performance since last year's tests and thus took less than the expected 17% longer to sync. Kudos to BTCD for being "most improved" and shout-out to Gocoin for effectively reaching performance parity with Bitcoin Core!
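One way to read these deltas is to compare each result against a "no changes" baseline. The sketch below takes this year's time and the stated delta, backs out last year's time, and scales it by the growth in chain data (370 GB to 434 GB). It assumes sync time grows linearly with data size, which is the same simplification used above; the function name `expected_minutes` is my own.

```python
GROWTH = 434 / 370  # chain data at block 760,000 relative to block 705,000

# (this year's sync time, change versus last year), both in minutes.
RESULTS = {
    "BTCD 0.23.3": (4047, -2148),
    "Gocoin 1.10.3": (435, -51),
    "Mako": (1424, -26),
    "Bitcoin Core 24.0": (430, 17),
    "Bcoin 2.2.0": (1928, 261),
    "Libbitcoin Server 3.6.0": (3387, 586),
}


def expected_minutes(this_year: int, delta: int, growth: float = GROWTH) -> float:
    """Sync time expected this year if nothing changed except the amount of data."""
    last_year = this_year - delta
    return last_year * growth


for name, (actual, delta) in RESULTS.items():
    expected = expected_minutes(actual, delta)
    ratio = actual / expected
    print(f"{name:<24} expected {expected:5.0f} min, actual {actual:>4} min ({ratio:.2f}x)")
```

A ratio below 1.00 means the implementation beat the baseline. Under this model Bitcoin Core comes in at roughly 0.89x and BTCD at roughly 0.56x, while Bcoin and Libbitcoin Server land close to 1.00x, as you would expect for software with no new release.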

Exact Comparisons Are Difficult

While I ran each implementation on the same hardware and synced against a local network peer to keep those variables as consistent as possible, there are other factors that come into play.

- Not all implementations have caching; even when configurable cache options are available it’s not always the same type of caching.

- Not all nodes perform the same indexing functions. For example, Libbitcoin Server always indexes all transactions by hash — it’s inherent to the database structure. Thus this full node sync is more properly comparable to Bitcoin Core with the transaction indexing option enabled.

- Your mileage may vary due to any number of other variables such as operating system and file system performance.

Conclusion

Given that the strongest security model a user can obtain in a public permissionless crypto asset network is to fully validate the entire history themselves, I think it’s important that we keep track of the resources required to do so.

We know that due to the nature of blockchains, the amount of data that needs to be validated for a new node that is syncing from scratch will relentlessly continue to increase over time. Thus far the tests I run are on the same hardware each year, but on the bright side we do know that hardware performance per dollar will also continue to increase each year.

It's important that we ensure the resource requirements for syncing a node do not outpace the hardware performance that is available at a reasonable cost. If they do, then larger and larger swaths of the populace will be priced out of self sovereignty in these systems.